What students can learn from DeepSeek’s build process

Most of us have heard the adage work smart, not hard. Since R-1 was built to outperform models programmed using resources and power far higher than was available to its makers, DeepSeek found a way to work smart.

It’s been a little over two years since OpenAI blew the world’s collective mind with ChatGPT, almost instantaneously establishing it as the gold standard within modern AI systems. Since then, all the big tech players in the world have been locked into a high-stakes, high-pressure race to rapidly launch and refine their own AI models to mimic human-like reasoning and conversational patterns while providing information or performing tasks assigned to them.

Naturally, this requires training these models on unimaginably vast volumes of data, at enormous financial cost, so they can, over time, offer the most accurate and contextual answers to the questions their users pose.

In just two years, ChatGPT has fundamentally changed how many of us look things up on the Internet. Many of us (teachers, entrepreneurs, and students alike) started ‘GPTing’ our preoccupations before ‘Googling’ them.

While most of us are AI immigrants (we changed our search behaviour), some of the younger students are AI natives (they started their search journeys with AI instead of sifting through multiple sources of information and making decisions about which source they found most dependable).

I’ve wondered, with not insignificant trepidation, about the consequences of AI hallucinations on this new generation of AI natives. You can train your own AI tool to encourage Socratic thinking in your students, but what do you do if the foundation model it was built on itself hallucinated because it simply hadn’t had enough time and training to work out its more problematic biases and flaws?

I’m sure it’s a question that keeps my fellow edtech founders and educationists awake at night as well.

I’ll admit that all of us bought into the narrative of enormous cost, speed, and time in the building of AI foundation models. That changed last month, when DeepSeek, the AI research lab backed by Chinese quantitative hedge fund High-Flyer, released R-1, its latest AI reasoning model. Its performance was found to be at par with o1, OpenAI’s most advanced model at the time, while being about 90% cheaper.

Understandably, there’s been a lot of chatter around each model’s performance and speed, with hot takes on which tool performs what tasks better. I’m deliberately not going to hypothesise despite having tried R-1 because I think it’s too early to pronounce judgements about “who did it better”.

What, to my mind, is worth talking about is R-1’s build.

An interesting thing happened when one of our senior robotics students asked me a very intriguing question: “What can I learn from the people who built R-1?”

To be able to answer that, I first had to explain to her in detail what I knew about how R-1 has been built at its core. As we progressed into the conversation, the room started to see parallels between how R-1 has been programmed to operate and what we constantly encourage our students to do, both inside and outside of class.

Here’s what we discovered:

Getting it right vs doing it fast

Reasoning models like R-1 are programmed to take a more analytical approach to problem-solving. While conventional large language models (LLMs) focus on predicting the next word and spitting out an answer at speed, reasoning models by design take time to process a query, come up with multiple possible answers to a problem, choose the one that best fits the context within which the question is asked, and then finally, offer it to the user.

This attempt to answer a question accurately also involves breaking it down into smaller components and answering each component before arriving at a larger answer. Additionally, the logic applied to arrive at a conclusion is made available to the questioner to agree or disagree with, making the process a learning experience for both the user and the tool. All of this means that success is measured in terms of accuracy in understanding the context and then offering a sensible response, not the speed at which some answer is generated.
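To make the idea of "smaller components with visible logic" concrete, here is a toy sketch in Python. It is only an analogy for a classroom, not a description of how R-1 is implemented; the `Step` class, the function name, and the shopping example are all invented for illustration.

```python
from dataclasses import dataclass


@dataclass
class Step:
    reasoning: str  # the logic shown to the questioner
    value: float    # the intermediate result for this sub-question


def solve_with_visible_steps(price: float, quantity: int, discount_pct: float) -> list[Step]:
    """Answer 'what is the total after discount?' one sub-question at a time."""
    steps = []

    # Sub-question 1: what does the order cost before any discount?
    subtotal = price * quantity
    steps.append(Step(f"Cost before discount: {price} x {quantity}", subtotal))

    # Sub-question 2: how much is the discount worth?
    discount = subtotal * discount_pct / 100
    steps.append(Step(f"Discount at {discount_pct}% of {subtotal}", discount))

    # Final answer: combine the two intermediate results.
    total = subtotal - discount
    steps.append(Step(f"Total: {subtotal} minus {discount}", total))
    return steps


steps = solve_with_visible_steps(price=20.0, quantity=3, discount_pct=10.0)
for number, step in enumerate(steps, start=1):
    print(f"Step {number}: {step.reasoning} = {step.value}")
```

Because every intermediate step is kept and printed, a reader who disagrees with the final figure can point to the exact step where the logic went wrong, which is the property the paragraph above describes.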

When we discuss how to learn, even before what is to be learned, we simplify the process for our students by breaking complex problems into smaller chunks and having them talk through their reasoning for an answer, so flaws in the logic can be caught and corrected in real time.

The importance of course correction

I strongly believe that one of the biggest prerequisites for long-term success in learning and life is the ability to accept one’s mistakes without feeling the need to hide them, and to adopt an attitude of analysis so that even a mistake can be a source of enrichment.

Transparency of thought enables that. Since reasoning models like R-1 constantly analyse and evaluate their responses using the feedback they receive on their visible chains of thought, they are better able to self-correct and improve their accuracy.

Over time, they can self-correct during the process of reasoning instead of after the fact, making them even more reliable and suited to sensitive tasks that require a higher order of thinking.

Learning through reinforcement instead of rote

If you use LLMs often enough, they can feel a lot like a person who has memorised large volumes of data and regurgitates it at every opportunity. I heard this all my life as a student and repeat it ad nauseam as an educator: understand, don’t mug up.

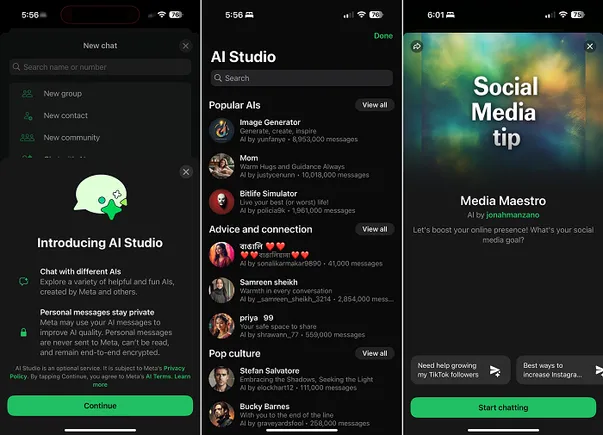

In a rudimentary way, this was DeepSeek’s approach while designing R-1. Instead of trying to improve the model’s accuracy by flooding it with more and more labelled data, DeepSeek allowed it to self-improve by learning from its own past behaviour. This is achieved through Group Relative Policy Optimisation (GRPO), under which answers are not simply marked correct or incorrect.

With GRPO, the model produces a group of answers to the same question, and each answer is scored relative to the rest of the group. If the feedback loop tells the model that one answer is better or more relevant to a given context than the others, it uses this information to teach itself. This not only makes training R-1 cheaper but also ensures that it stays a constantly learning dynamic model instead of a static one.
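The "relative" part of GRPO can be sketched in a few lines of Python. In the published description of the method, several answers are sampled for the same prompt and each answer's reward is measured against the average of that group. The sketch below covers only this scoring step, not the full training loop; the function name, the small `eps` constant, and the choice of population standard deviation are assumptions made for illustration.

```python
from statistics import mean, pstdev


def group_relative_advantages(rewards: list[float], eps: float = 1e-8) -> list[float]:
    """Score each sampled answer relative to the other answers in its group.

    A positive value means "better than the group average", a negative value
    means "worse". The eps term only guards against division by zero when
    every answer in the group received the same reward.
    """
    baseline = mean(rewards)   # the group's average reward
    spread = pstdev(rewards)   # how much the rewards vary within the group
    return [(reward - baseline) / (spread + eps) for reward in rewards]


# Four hypothetical answers to one prompt: two judged good (1.0), two poor (0.0).
rewards = [1.0, 0.0, 0.0, 1.0]
advantages = group_relative_advantages(rewards)
print(advantages)  # roughly [1, -1, -1, 1]
```

An answer is therefore never good or bad in isolation: the same reward can earn a positive score in a weak group and a negative one in a strong group. Using the group average as the baseline is also what lets this approach do without a separate critic model, which is one reason it is cheaper to train with.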

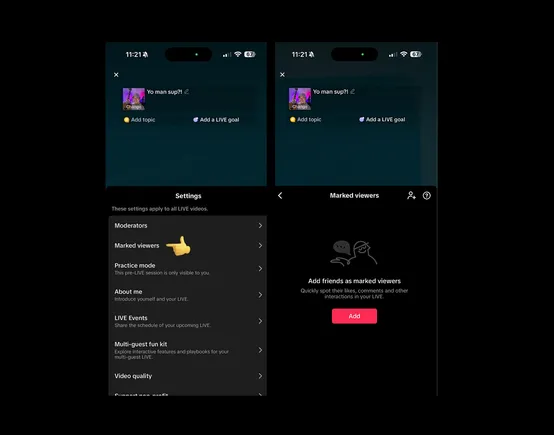

Working collaboratively

Most of us have heard the adage work smart, not hard. Since R-1 was built to outperform models that were programmed using resources and power far higher than was available to its makers, DeepSeek found a way to work smart.

The neural network of the model takes every task and assigns pieces of it to different specialised sub-models instead of using all its firepower at every step of the way, a design commonly known as a mixture of experts. In layperson’s terms, this means picking the right person for the job, so the work is distributed efficiently across the neural network, with every sub-model working on its area of expertise.
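The "right person for the job" idea can be illustrated with a toy router in Python. This is a generic textbook form (a softmax gate that keeps only the top-scoring experts) and is not claimed to be DeepSeek's exact routing scheme; in real models the routing is typically done per token rather than per task. The expert functions and router scores below are made up for the example.

```python
import math


def softmax(scores: list[float]) -> list[float]:
    """Turn raw router scores into probabilities that sum to 1."""
    highest = max(scores)
    exps = [math.exp(s - highest) for s in scores]  # subtract max for stability
    total = sum(exps)
    return [e / total for e in exps]


def route(router_scores: list[float], top_k: int = 2) -> dict[int, float]:
    """Choose the top_k experts for one input and renormalise their weights."""
    probs = softmax(router_scores)
    chosen = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:top_k]
    weight_sum = sum(probs[i] for i in chosen)
    return {i: probs[i] / weight_sum for i in chosen}


def moe_output(x: float, experts: list, router_scores: list[float], top_k: int = 2) -> float:
    """Run only the chosen experts and blend their outputs by router weight."""
    weights = route(router_scores, top_k)
    return sum(weight * experts[i](x) for i, weight in weights.items())


# Four hypothetical "experts"; only two of them do any work for this input.
experts = [lambda x: x + 1, lambda x: 2 * x, lambda x: x * x, lambda x: -x]
router_scores = [0.1, 2.0, 2.0, -1.0]  # the router favours experts 1 and 2
print(route(router_scores))                   # experts 1 and 2, equal weights
print(moe_output(3.0, experts, router_scores))
```

The saving comes from the experts that are never called: with `top_k=2`, half of the toy network sits idle for this input, while the two best-suited experts share the work.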

R-1 has all but obliterated the hegemony of massive players with unlimited resources, but even setting aside its implications for the AI ecosystem, the more I learn about the build of R-1, the more inspired I am by the lessons its design and decision-making framework can teach all of us and, most importantly, the impressionable minds of students.

Ravi Bhushan is the Founder and CEO of BrightCHAMPS.

Edited by Suman Singh

(Disclaimer: The views and opinions expressed in this article are those of the author and do not necessarily reflect the views of YourStory.)
