Researchers concerned to find AI models misrepresenting their “reasoning” processes

New Anthropic research shows AI models often fail to disclose reasoning shortcuts.

Remember when teachers demanded that you "show your work" in school? Some new types of AI models promise to do exactly that, but new research suggests that the "work" they show can sometimes be misleading or disconnected from the actual process used to reach the answer.

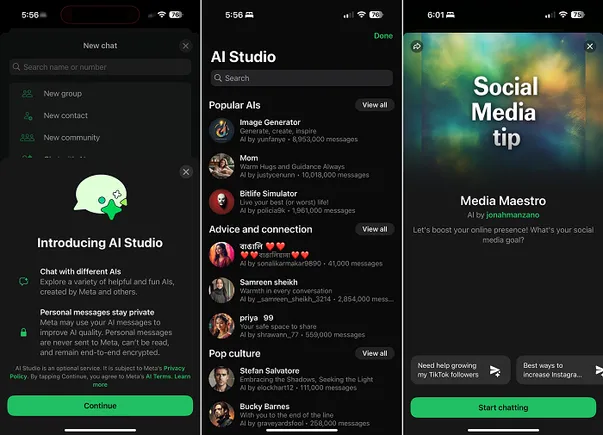

New research from Anthropic, creator of the ChatGPT-like Claude AI assistant, examines simulated reasoning (SR) models such as DeepSeek's R1 and Anthropic's own Claude series. In a research paper posted last week, Anthropic's Alignment Science team demonstrated that these SR models frequently fail to disclose when they've used external help or taken shortcuts, despite features designed to show their "reasoning" process.

(It's worth noting that OpenAI's o1 and o3 series SR models were excluded from this study.)
